The majority of climate scientists think that global temperatures have risen over the past century mostly because of human activity. However, there are some climate scientists who think that the small changes we have seen in global temperature are mostly the result of natural variations that exist independently of people. Others simply say we don’t have enough information to know how much human activity has played a role in the process. Add to that the unreliability of much of the early data regarding global temperatures, and you end up with a picture that is far more murky than what most media outlets and politicians want you to see.

A recently published study might help to eventually shed some light on how much human activity affects global temperatures. It comes from four climate scientists in China who are affiliated with The Climate Center of the Zhejiang Meteorologic Bureau, the Earth Science School of Zhejiang University, and the Shanghai Climate Center. They are convinced that the vast majority of the changes we have seen in global temperatures are due to natural variations, and that those variations are buffered by the oceans. As a result, they have tried to analyze global temperatures from that perspective.

Since global temperature data sets don’t really agree with one another, the authors first had to choose which global temperatures they would actually use. They chose the Global Land Surface Temperature Anomaly Index (GLST) as compiled by NOAA. They then looked for correlations between those data and the Sea Surface Temperatures (SST) as compiled by the Hadley Climate Center. The correlations they found led them to develop a mathematical equation that reproduces the GLST data. Finding a single equation that fits all the GLST data might seem like an impossible task, but it is not. One phrase I often hear from my nuclear chemistry colleagues is, “It only takes four parameters to fit an elephant.” In other words, if you have enough parameters in your equation, you can fit just about anything.
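To make the “elephant” point concrete, here is a minimal Python sketch. It is not the authors’ 20-parameter equation, which is not reproduced here; it uses Lagrange interpolation, which builds a polynomial with one parameter per data point. Four parameters are therefore enough to pass exactly through any four points, even points that are pure random noise. The data and the function name `lagrange_fit` are hypothetical, chosen only for illustration.

```python
import random

def lagrange_fit(xs, ys):
    """Return the unique polynomial of degree len(xs) - 1 that passes
    through every (x, y) point. It has one free parameter per point."""
    def p(x):
        total = 0.0
        for i, (xi, yi) in enumerate(zip(xs, ys)):
            term = yi
            for j, xj in enumerate(xs):
                if j != i:
                    # Basis factor: equals 1 at x = xi and 0 at every other xj
                    term *= (x - xj) / (xi - xj)
            total += term
        return total
    return p

random.seed(1)
xs = [0.0, 1.0, 2.0, 3.0]                   # four hypothetical "years"
ys = [random.uniform(-1, 1) for _ in xs]    # pure noise: no underlying trend
p = lagrange_fit(xs, ys)                    # a four-parameter "model"

# The fit reproduces every point (to floating-point precision),
# even though the "data" are just random numbers.
for x, y in zip(xs, ys):
    print(f"x={x}: data={y:+.4f}, fit={p(x):+.4f}")
```

A perfect fit here says nothing about whether the polynomial describes any real process, which is the same caution the post applies to the 20-parameter equation.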

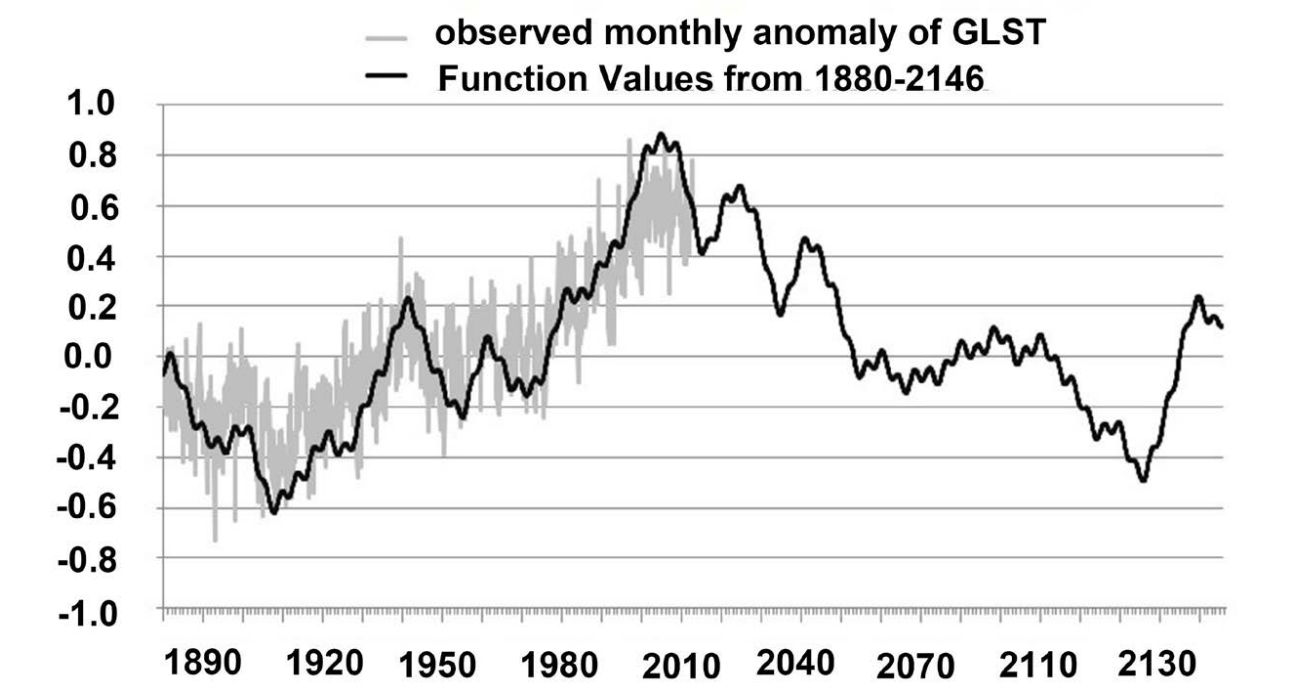

Of course, for something as complex as global temperatures, it takes more than four parameters. In fact, their paper indicates that it took 20. With their 20-parameter equation, however, they were able to reasonably reproduce the global temperature data they were analyzing. The results can be seen in the image above: the jagged grey line is the data, and the smoother black line is the output of their equation. As you can see, it does a pretty good job of fitting the known data.

Does that mean their equation is a good explanation of global temperatures? Not at all. It is simply an equation that has been forced to fit the data. What I find interesting, however, are the temperatures it predicts for the future. According to the equation, the earth has already reached its peak temperature for some time to come, and over the next 100+ years, the planet’s average temperature will decline. Do I think that prediction is correct? There is no way I can adequately judge that. There are simply too many unknowns in climate science for anyone to make a reliable prediction about what is going to happen in the future. Perhaps we will eventually learn enough about climate science to change that, but right now, the uncertainties simply preclude reasonable predictions.

However, here’s what I will say about this very interesting study: The authors assume that the vast majority of the temperature variations we have seen are the result of natural processes. If, over the next 30 years, the data continue to fall in line with the predictions of their equation, that will lend more credence to their assumption. If not, that will indicate either that their assumption is wrong or that some of the natural variations that cause global temperature changes are too long-term to show up in a century’s worth of unreliable temperature data.

Regardless of the outcome, I do think that this paper, while simple in its approach, is a valuable addition to climate science.

Did you ever see that Twilight Zone episode where the girl has a nightmare that the earth is getting hotter and hotter, and then she wakes up only to find it’s actually getting cooler and cooler?

: )

Yes. It’s brilliant!

I watched a documentary recently about how global warming and global cooling could be happening at the same time. It cited the growing ice mass in Antarctica but also said that ocean levels are rising. I don’t know how possible that is.

[The rest of the message was removed because it had nothing to do with the post. I contacted the author of the post privately regarding the material I removed. – Jay Wile]

Global warming and global cooling are not possible at the same time. However, local cooling could occur during global warming, and local warming could occur during global cooling. This is because a large change in global temperature can alter weather patterns, changing a once-warm area into a cooler area, or a once-cold area into a warmer area. That’s probably what the documentary was saying. The problem with the differences at the poles is that the standard mechanism of global warming says that the poles should heat up the most. Thus, if global warming were to occur due to carbon dioxide, it should warm both poles. It might warm them at different rates, but it should warm both of them.

I replied to the other stuff in your comment via email, since it was unrelated to global warming.

Not surprising that it wasn’t too high around 1929. Back in the ’90s (I think), I was watching TV with my friend, and we had the news on. The forecaster said that the lowest high temperature ever recorded for that day in our area (this was in June) was 29 degrees, set, I think, in 1929. My friend said that people probably thought it was the apocalypse or something.

Dr. Wile, I appreciate you publishing this and other articles on the topic. All you hear from the media is “global warming is a fact,” and they’ve gotten to where they don’t even say how they know anymore or talk about any levels of uncertainty or variation. They’ve abandoned critical thinking on the topic.

Even with 20 parameters it’s not a particularly good fit to the data. Why don’t we just do nothing until it’s too late?

Actually, Chris, the goodness of a fit is not a subjective thing. It can be measured by a correlation coefficient, which has a maximum value of 1, indicating a perfect fit. Their correlation coefficient is 0.9034706, which indicates a very good fit. Another way to measure the goodness of fit is with the root mean squared error (RMSE), which has a minimum value of 0, indicating a perfect fit. Their RMSE is 0.03. Either way, it’s a really good fit.
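For readers who want to see how those two numbers are computed, here is a minimal Python sketch. It assumes the correlation coefficient in question is Pearson’s r; the two short series are made-up placeholders, not the paper’s data, and `pearson_r` and `rmse` are hypothetical helper names.

```python
import math

def pearson_r(data, model):
    """Pearson correlation coefficient between observed and fitted values."""
    n = len(data)
    mean_d = sum(data) / n
    mean_m = sum(model) / n
    cov = sum((d - mean_d) * (m - mean_m) for d, m in zip(data, model))
    var_d = sum((d - mean_d) ** 2 for d in data)
    var_m = sum((m - mean_m) ** 2 for m in model)
    return cov / math.sqrt(var_d * var_m)

def rmse(data, model):
    """Root mean squared error: square each residual, average, take the root."""
    return math.sqrt(sum((d - m) ** 2 for d, m in zip(data, model)) / len(data))

# Hypothetical temperature anomalies and model output (illustration only)
data = [-0.20, -0.10, 0.00, 0.15, 0.30]
model = [-0.18, -0.12, 0.02, 0.12, 0.31]

print(f"r = {pearson_r(data, model):.3f}, RMSE = {rmse(data, model):.3f}")
```

The two measures capture different things. r reports how closely the model tracks the ups and downs of the data, so a model that is off by a constant offset can still score r = 1. RMSE reports how far the fitted values sit from the data in the data’s own units, so it would catch that offset. That is one reason it helps to report both.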

As for doing nothing, it’s fortunate that most people don’t have your attitude. In the US, for example, greenhouse gas emissions per capita have been falling since the year 2000. There are innovative companies developing energy sources that don’t release greenhouse gases, there are automobile companies that have produced low-emission automobiles, and there are incentives for companies to capture and store their carbon instead of releasing it. In addition, a lot of scientific research is being done in an attempt to understand the effects of carbon dioxide on global temperature.

I think you missed the sarcasm there. Of course all those things you mention are only beneficial if we are experiencing anthropogenic global warming.

I hope you are still being sarcastic, Chris. Obviously, any advances in technology can be beneficial in unexpected ways, so even if there is no significant anthropogenic global warming, all the technologies I listed will probably be beneficial. If there is significant anthropogenic global warming, then hopefully the scientific research I mentioned at the end will demonstrate that. Until then, the technologies I mentioned (and others) will continue to develop, and that will help us fight the problem, if it exists.

If the warming is not due to anthropogenic factors, then the listed technologies will have no effect on the natural processes that are actually causing it (e.g., Earth’s orbital cycles, the sun’s cycles, etc.). In that case, we would do better to apply those funds to mitigating the effects of climate change, such as rising sea levels.

Better still, apply them to reducing world poverty, improving food security, reducing disease, etc.

I would have to disagree with you, Tom. First, it’s possible that even if there is little or no anthropogenic global warming, the buildup of carbon dioxide could have other negative consequences that are unforeseen or not yet fully appreciated. For example, while I think ocean acidification is probably not a problem, we haven’t studied it enough to make that determination. Thus, it’s possible that those technologies will help with ocean acidification, if that becomes a problem. It may be that too much carbon dioxide will cause too much algae buildup in the ocean or too many green plants on earth. If that’s true, once again, those technologies might be of use.

Second, technology rarely has only one application. Even if there is little or no anthropogenic global warming, the development of solar energy could be beneficial in areas where grid distribution of electricity is not practical. In the same way, biofuel technology might benefit countries that have little in the way of oil and coal reserves but a lot in terms of agriculture. Yes, reducing world poverty, improving food security, and reducing disease are important, but we also know that when a country has affordable access to electricity, those problems are not as prevalent. Thus, some of the listed technologies might eventually help with those problems, too.

We can’t dump a ridiculous amount of money into something that we don’t know is a problem. We also can’t ignore it, just because we don’t know it’s a problem. We need a reasonable approach to spending our dollars, and unfortunately, I don’t hear a lot of voices arguing for that.

Dr. Wile, since we’re talking about global cooling, what do you say about the incessant reports that the current year is always the hottest on record?

Thanks for asking, Brandon. Those kinds of statements can be interesting, but anyone who thinks they have something to do with human-produced climate change is displaying a shocking ignorance of basic natural history. Consider, for example, the global temperature record over the past 2000 years, as reconstructed by Loehle and others in the journal Energy & Environment. Now, of course, there are many different ways to come up with such data, and they all have their problems. However, several lines of evidence indicate that there was an unusually warm period in earth’s history from about 600 AD to 1100 AD (the Medieval Warm Period). Had there been global temperature monitoring back in 860 AD, the headlines would have read “The past 10 years have been the 10 hottest years on record.” While interesting, that says nothing about human-induced climate change, since the world reached “record lows” about 800 years later.

Yes, it’s warmer than usual right now, but that says nothing about climate change or what is causing it.