Biology is a vocabulary-driven science, especially in the introductory classes that are required for high school and early college. In the biology course I co-wrote with Dr. Paul Madtes, for example, students are required to learn more than 15 new vocabulary words every two weeks. More importantly, the later vocabulary words require that they remember the definitions of the earlier vocabulary words, so they must keep all of those definitions in mind throughout the entire course. Needless to say, this can be a serious challenge for some students, especially those who don’t memorize things easily.

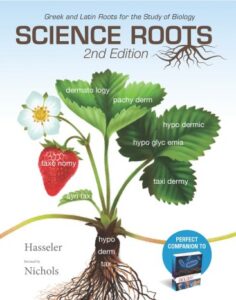

Is there a way to help students with this potentially daunting task? There is. Most science terms have their roots in Latin or ancient Greek, and those roots appear over and over again in different vocabulary words. If you learn one root word, you gain insight into multiple scientific terms. Consider the Greek root hypo, which means “under” or “below.” It is used in biological terms like hypodermis (under the dermis), hypothermia (below-normal body temperature), hypoglycemic (below-normal blood sugar), and hypotonic (lower solute concentration than the solution it is compared to). Now consider the Greek root hyper, which means “over” or “more than.” With that information, you know what hyperthermia, hyperglycemic, and hypertonic mean. Of course, this isn’t perfect, because there is no hyperdermis. Instead, we have an epidermis, which uses the Greek root epi, meaning “on top of” or “over.” Nevertheless, knowledge of specific Latin and Greek words can really help you master biological language. In fact, it can help you better master the English language in general, since many everyday English words use these same roots.

Of course, the best way to do this is to learn Latin and Greek early in life. That’s why schools used to require all their students to learn Latin at a minimum. Nowadays, unfortunately, schools are focused on producing obedient, subservient workers instead of educated citizens. As a result, only private classical schools continue to require Latin of all their students. Nevertheless, you can still make use of Latin and Greek roots, even if you don’t know the languages. That’s the aim of Science Roots by Nancy Paula Hasseler (2nd edition revised by Stephen J. Nichols).

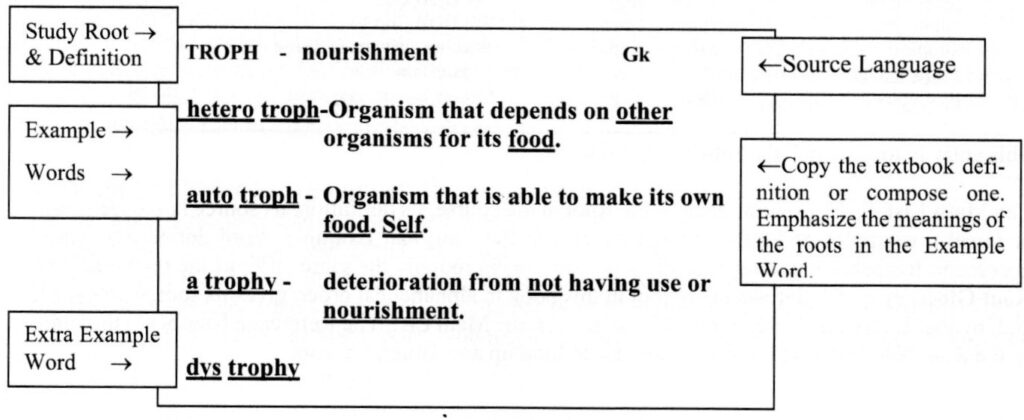

Students who use this book will make a study card for each root, listing its meaning and its source language (Greek or Latin). Underneath that, the student will write the biology vocabulary words that use the root, written in a way that emphasizes the root. Here is an example, which comes from the book’s introduction:

How will the student know when to make a card and when to add words to it? Well, Science Roots is published by the same company that publishes Discovering Design with Biology (the book I co-wrote with Dr. Paul Madtes), so it is designed to have students make a new card, or add to an existing one, whenever they reach a vocabulary word in that biology book. I already encourage students to make a notecard for each vocabulary word, so using Science Roots doesn’t create much extra work for them. They need to make notecards anyway, so why not make this kind? These cards contain additional information, and more importantly, they help students see the connections between the words they are learning.

If students use Science Roots along with (or before) Discovering Design with Biology, they will not only have a deeper understanding of the language of biology, but they will probably also have an easier time learning all those words!

In my opinion, one of the best ways to think deeply about an issue is to read about it from different points of view. Generally, I have to do that by reading many books by different authors on the same topic. In that situation, however, I don’t get to experience any interaction between the authors. That’s what makes a book like

I wrote the first two editions of Exploring Creation With Physical Science, but the publisher used a different author (Vicki Dincher) to make changes for a third edition.

Years ago, I read